Deep Learning Projects

Top 100 Deep Learning Projects for Engineering Students In 2022-2023

Deep Learning Project Ideas

With advances in artificial intelligence, deep learning is playing an important role in solving problems across many domains. The technology aims to imitate biological neural networks, that is, those of the human brain. For engineering students, a practical approach matters more than theoretical knowledge alone, so we have compiled an extensive list of projects covering a wide range of real-world application areas. Let us explore some interesting deep learning project ideas that beginners and professionals alike can work on to test their knowledge and gain hands-on experience.

Deep learning is a branch of machine learning that uses artificial neural networks arranged in hierarchical layers to perform specific ML tasks. In addition to supervised training on labeled data, deep learning networks can learn from unstructured or unlabeled data. Artificial neural networks are loosely modeled on the human brain, with neuron nodes interconnected to form a web-like structure.

Deep learning projects and examples in real life:

New advances are constantly being made in this domain, and the more deep learning project ideas you try, the more experience you gain.

- Self-Driving Cars
- News Aggregation and Fraud News Detection
- Natural Language Processing
- Virtual Assistants
- Entertainment
- Visual Recognition
- Fraud Detection
- Healthcare
- Personalization
- Detecting Developmental Delay in Children
- Colorization of Black and White Images
- Adding Sounds to Silent Movies
- Automatic Machine Translation
- Automatic Handwriting Generation
- Automatic Game Playing
- Language Translations
- Pixel Restoration
- Photo Descriptions
- Demographic and Election Predictions
- Natural language processing, computer vision, bioinformatics
- Speech recognition, audio recognition, machine translation, social network filtering

Advanced Deep Learning Project Ideas

01. Person age & gender recognition using deep learning techniques.

Today's smartphone cameras incorporate AI and can even estimate a person's gender and age. Deep learning can be used for this, but a large amount of data is needed to build a model that detects age and gender.

02. Driver drowsiness detection using a deep learning model.

A driver's drowsiness can be detected with a driver drowsiness model built using deep learning techniques. Put into practice, such a model can help drivers avoid accidents.

03. Pose estimation for people

Human pose estimation is the task of determining a person's body alignment by locating various body joints. Snapchat, for example, uses pose estimation to determine where a person's head and eyes are so that it can position a filter. Similarly, we can estimate a person's pose and apply filters to them in real time.

04. Computer vision technique for quantifying rehabilitation progress in stroke patients: a deep neural network approach

Limb rehabilitation training speeds up recovery and improves quality of life for stroke patients with hemiparalysis. Both doctors and patients need to be aware of the patient's progress in rehabilitation. A computer vision method that can identify a patient's training action, movement trajectory, and activity status can monitor rehabilitation more precisely and effectively than wearable sensors or depth cameras. In the clinic, however, beyond static measures of real-time behaviour, it is difficult to quantify how movement changes across training sessions in order to assess rehabilitation progress.

In this study, we propose a computational method for comparing changes in upper-limb motion. First, the video data were preprocessed with OpenPose to identify the upper-limb joint points, and the position of each joint point was expressed in Cartesian coordinates. Second, to determine rehabilitation progress, we used the dynamic time warping algorithm to compute the similarity of the limb's lift angle and lift time across training periods. The results show that the approach can quantify and analyse the effectiveness of rehabilitation movements using a basic monocular camera, which makes it promising for practical use.
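The two steps described above, deriving a lift angle from joint coordinates and comparing angle sequences with dynamic time warping, can be sketched roughly as follows. This is a minimal illustration, not the study's implementation: the function names `lift_angle` and `dtw_distance`, the choice of shoulder, elbow, and hip as the joints, and the absolute-difference cost are all assumptions made for the example.

```python
import math


def lift_angle(shoulder, elbow, hip):
    """Angle in degrees at the shoulder between the upper arm and the torso.

    Each argument is an (x, y) joint position, for example taken from a pose
    estimator. Which joints define the lift angle is an assumption here.
    """
    ax, ay = elbow[0] - shoulder[0], elbow[1] - shoulder[1]
    bx, by = hip[0] - shoulder[0], hip[1] - shoulder[1]
    dot = ax * bx + ay * by
    norm = math.hypot(ax, ay) * math.hypot(bx, by)
    cos_angle = max(-1.0, min(1.0, dot / norm))  # clamp against rounding error
    return math.degrees(math.acos(cos_angle))


def dtw_distance(a, b):
    """Classic dynamic time warping distance between two 1-D sequences."""
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] is the best alignment cost of a[:i] against b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i][j] = step + min(cost[i - 1][j],      # a advances
                                    cost[i][j - 1],      # b advances
                                    cost[i - 1][j - 1])  # both advance
    return cost[n][m]


# Hypothetical lift-angle sequences (degrees) from two training sessions.
session_1 = [0, 10, 20]
session_2 = [0, 0, 10, 10, 20, 20]  # same movement performed more slowly
print(dtw_distance(session_1, session_2))
```

Because dynamic time warping aligns sequences of different lengths, a slower repetition of the same movement scores as similar, while a change in the reached angle increases the distance.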

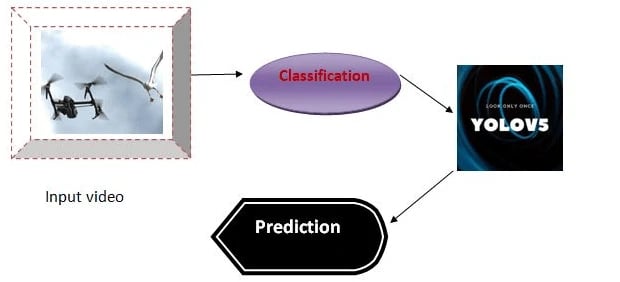

05. YOLOv4 and YOLOv5 approach for recognising birds and drones.

As drones have become more popular and widespread, spotting them has become increasingly difficult, so we built this project to identify them. Alongside their beneficial uses, their potential for malicious use has raised concerns about physical infrastructure security, safety, and surveillance at airports. In recent months there have been numerous reports of drones being used improperly at airports, disrupting airline operations, and in some cases people were unable to locate the drones or distinguish them from birds.

To recognise and identify two different types of drones and birds, we implement this project using a deep learning-based method. Evaluation on the generated image dataset shows that the proposed method is more efficient than existing detection systems. Because of their similar physical characteristics and behaviours, drones and birds are frequently mistaken for one another. The proposed method can not only determine whether drones are present in a given area but also distinguish between two different types of drones and tell them apart from birds.

06. ResNet50, GoogLeNet, SqueezeNet, and AlexNet for diagnosing grape diseases.

Because climatic and environmental conditions are constantly changing, diseases are relatively common in crops. Crop diseases are typically difficult to control and affect both growth and yield. Accurate disease detection and timely control measures are essential for ensuring high production and good quality. The grape plant, widely cultivated in India, is susceptible to various diseases that can harm the fruit, stem, and leaves. The fruit conditions considered here are early signs of sour rot, powdery mildew, and grey mould, alongside healthy grapes. It is therefore necessary to create an automatic system that can identify the different types of disease and suggest relevant treatments.

07. Classification of Brain Tumors Using a Highly Accurate Attention-Based Convolutional Neural Network

Brain tumours have long been among the most common tumours that threaten human life. Currently, China still lacks computer-aided diagnostic tools designed specifically to identify particular types of brain tumour and to support related research. For this work, publicly accessible brain magnetic resonance imaging (MRI) datasets were gathered and preprocessed with steps such as normalisation.

Taking into account the complexity of brain MRI images, this study combines an attention mechanism with a convolutional neural network (CNN) to reduce the influence of irrelevant background features in the images. The results obtained with the proposed method were compared with classic models such as VGGNet and MobileNet.

Testing on the dataset shows that the attention-based CNN model is significantly more accurate on the brain tumour classification task than the other three models, indicating that the attention mechanism can partly mitigate the impact of irrelevant context on the classification result. The trained model is also deployed on a website with an easy-to-use interface that is practical for medical staff.

08. System for Detecting Sitting Posture Based on the Keras Framework

This project builds a deep learning-based sitting posture detection system designed to run in real time on low-power embedded hardware. A thin-film pressure sensor measures the pressure the body exerts while seated. The system collects and analyses pressure data for various sitting positions and builds a classification model using the Keras framework.

The model is then deployed to the STM32 using STM32CubeMX to provide real-time data collection, processing, and sitting posture identification. Finally, the STM32 communicates with an Android application over the MQTT protocol, so that the sitting posture can be detected and classified in real time and the user receives the relevant posture correction cues.

09. SE-ResNeXt50 model to identify and control strawberry diseases and pests.

Because few high-quality open image datasets are currently available, studies on strawberry disease and pest diagnosis and control are rare. To address this, images of 13 common strawberry pests and diseases were first collected independently, both online and offline, to build a dataset. Second, the SE-ResNeXt50 model was developed, which proved more practical than the ResNet50 residual network model.

More precisely, the Inception idea was combined with the ResNet50 model to widen the network, 32 branches were set, and an attention mechanism, the squeeze-and-excitation (SE) module, was added. This addressed the problems of complex image backgrounds and information interference and improved the model's identification efficiency and accuracy. According to the findings, the SE-ResNeXt50 model reached an accuracy of 89.3%, 8% higher than that of the ResNet50 model. The SE-ResNeXt50 model converged after 15 iterations, demonstrating effective identification.
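To make the squeeze-and-excitation idea concrete, here is a minimal sketch in plain Python. It is only an illustration under simplifying assumptions: the function name `squeeze_excite` and the weight matrices `w1` and `w2` are hypothetical, biases are omitted, and a real model would use a deep learning framework with learned weights.

```python
import math


def squeeze_excite(feature_map, w1, w2):
    """Reweight the channels of a feature map with an SE-style gate.

    feature_map: list of C channels, each an H x W list of numbers.
    w1: hidden x C weight matrix (reduction layer), assumed already learned.
    w2: C x hidden weight matrix (expansion layer), assumed already learned.
    Returns the rescaled feature map and the per-channel gates.
    """
    # Squeeze: global average pooling turns each channel into one number.
    squeezed = [
        sum(sum(row) for row in channel) / (len(channel) * len(channel[0]))
        for channel in feature_map
    ]
    # Excitation: small bottleneck network, ReLU then sigmoid.
    hidden = [max(0.0, sum(w * s for w, s in zip(row, squeezed))) for row in w1]
    gates = [
        1.0 / (1.0 + math.exp(-sum(w * h for w, h in zip(row, hidden))))
        for row in w2
    ]
    # Scale: multiply every value in a channel by that channel's gate.
    scaled = [
        [[value * gate for value in row] for row in channel]
        for channel, gate in zip(feature_map, gates)
    ]
    return scaled, gates


# Toy example: two 2x2 channels and hand-picked (not learned) weights.
channels = [[[1, 1], [1, 1]], [[2, 2], [2, 2]]]
scaled, gates = squeeze_excite(channels, w1=[[1.0, 0.0]], w2=[[0.0], [1.0]])
print(gates)
```

Each gate lies between 0 and 1, so channels the gate considers uninformative are suppressed while informative ones pass through mostly unchanged.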

Additionally, because it was trained on data collected in the real world, the SE-ResNeXt50 model is robust and generalises well, which better addresses the needs of strawberry growers. Based on the SE-ResNeXt50 model, a WeChat mini-program for strawberry disease and pest identification was created, allowing fruit growers to quickly identify strawberry pests and diseases and receive prevention recommendations, supporting the growth of the strawberry sector.

10. Technology for detecting IoT intrusions based on deep learning

A network intrusion detection model combining convolutional neural networks and gated recurrent units is presented to address two problems with existing network intrusion detection models: low accuracy in multi-class classification of intrusion behaviours and redundancy among data features. The random forest algorithm and Pearson correlation analysis are used to address feature redundancy.

Next, the temporal features of the data are extracted using TCN and GRU, and an attention module is introduced to assign different weights to the features, reducing overhead and improving model performance. Finally, classification is performed with the softmax function. Evaluated on the Bot-IoT dataset, the model is reported to reach 99.99% accuracy.
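The Pearson correlation step used for removing redundant features can be sketched as follows. This is a hedged illustration only: the function names, the 0.95 threshold, the greedy keep-first strategy, and the toy feature names are assumptions for the example, and the random forest importance ranking mentioned above is not shown.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    std_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    std_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if std_x == 0 or std_y == 0:
        return 0.0  # a constant column carries no linear relationship
    return cov / (std_x * std_y)


def drop_redundant(features, threshold=0.95):
    """Keep a feature only if it is not strongly correlated with one already kept.

    features: dict mapping a feature name to its list of values.
    threshold: assumed cut-off on the absolute correlation.
    """
    kept = []
    for name, column in features.items():
        if all(abs(pearson(column, features[k])) < threshold for k in kept):
            kept.append(name)
    return kept


# Hypothetical traffic features: "bytes" is an exact multiple of "packets".
traffic = {
    "packets": [1, 2, 3, 4],
    "bytes": [2, 4, 6, 8],
    "duration": [4, 1, 3, 2],
}
print(drop_redundant(traffic))
```

In this toy data the `bytes` column adds no information beyond `packets`, so only `packets` and `duration` are kept.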

11. Enhanced particle swarm optimization technique and neural network for human fall detection.

Fall detection has become a widespread concern in public safety as the world's population ages. Fall injuries among the elderly can be effectively reduced by quickly and precisely identifying falls in surveillance footage and promptly sending alerts for help.

For real-time fall detection in indoor settings, this research proposes a hybrid approach based on an enhanced particle swarm optimization technique and a neural network. AlphaPose is used to extract human keypoints from video frames, and an enhanced particle swarm optimization neural network model classifies the keypoints in real time. According to experimental findings, this technique can accurately identify falls in indoor scenes.
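For readers unfamiliar with particle swarm optimization, the sketch below shows the standard (not the enhanced) algorithm minimising a toy function. The function name `pso_minimize`, the parameter values, and the sphere objective are assumptions for illustration; in a fall-detection setting the objective would instead be something like the validation loss of the neural network as a function of its weights or hyperparameters.

```python
import random


def pso_minimize(objective, dim, n_particles=30, iterations=200,
                 inertia=0.7, c_personal=1.5, c_global=1.5, bound=5.0, seed=0):
    """Standard particle swarm optimization over [-bound, bound]^dim."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    pos = [[rng.uniform(-bound, bound) for _ in range(dim)]
           for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    best_pos = [p[:] for p in pos]             # each particle's best position
    best_val = [objective(p) for p in pos]
    g = min(range(n_particles), key=lambda i: best_val[i])
    g_pos, g_val = best_pos[g][:], best_val[g]  # swarm-wide best

    for _ in range(iterations):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Velocity mixes momentum, pull to own best, pull to swarm best.
                vel[i][d] = (inertia * vel[i][d]
                             + c_personal * r1 * (best_pos[i][d] - pos[i][d])
                             + c_global * r2 * (g_pos[d] - pos[i][d]))
                pos[i][d] += vel[i][d]
            val = objective(pos[i])
            if val < best_val[i]:
                best_val[i], best_pos[i] = val, pos[i][:]
                if val < g_val:
                    g_val, g_pos = val, pos[i][:]
    return g_pos, g_val


def sphere(point):
    """Toy objective with its minimum of 0 at the origin."""
    return sum(x * x for x in point)


position, value = pso_minimize(sphere, dim=2)
print(position, value)
```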

12. Deep Convolutional Generative Adversarial Networks to Create Human Faces.

Generative adversarial networks (GANs) are powerful methods for producing images, sounds, text, or video that closely resemble real data. The goal of this project is to create realistic-looking human faces from random noise using deep convolutional generative adversarial networks (DCGANs).

13. Variational Autoencoders

Variational autoencoders (VAEs) have enormous potential in deep learning. They can generate new data similar to the training data, and they consist of an encoder and a decoder. A good starting point is generating digits using the MNIST dataset.
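Two pieces sit between a VAE's encoder and decoder and are easy to show without a framework: sampling a latent vector with the reparameterization trick, and the KL divergence term of the loss. The sketch below assumes the usual setup in which the encoder outputs a mean and a log-variance for each latent dimension; the function names are hypothetical, and the encoder and decoder networks themselves are not shown.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps, with eps drawn from a standard normal.

    Writing the sample this way keeps the randomness in eps, so gradients
    can flow through mu and log_var during training.
    """
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """KL divergence from N(mu, diag(exp(log_var))) to N(0, I)."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv)
                      for m, lv in zip(mu, log_var))


rng = random.Random(0)
mu, log_var = [0.5, -0.2], [0.0, -1.0]  # hypothetical encoder outputs
z = reparameterize(mu, log_var, rng)     # latent vector passed to the decoder
print(z, kl_to_standard_normal(mu, log_var))
```

The KL term is zero exactly when the encoder outputs a standard normal, which is what lets new digits be generated later by decoding samples drawn from N(0, I).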

14. Colouring old black-and-white photos using a deep CNN

The goal of this project is to create a model that can convert vintage black-and-white photos into colourful ones. While it used to take digital painters many hours to colour a picture, deep learning today makes it feasible to colour an image in only a few seconds.

15. Language translator

While it can take a person a year or more to learn a language, a model can be trained on one far more quickly. For this project, we can create a language translator app that translates between English and other languages, including regional languages.

16. Cucumber Disease detection in Image Recognition Using a New Attention Module

Deep convolutional neural networks are a significant advance in computing, but they need a lot of labelled data for model training. In the agricultural industry, it is challenging to build a sizable image dataset of crop diseases. In this study, we propose a solution based on a channel and position block (the CPB module). Given an intermediate feature map, the CPB module infers attention maps along the channel and position dimensions.

The attention maps are then multiplied with the input feature map for adaptive feature refinement. Using our own crop disease image dataset, we conducted rigorous tests to verify the CPB module's efficacy. Class activation map (CAM) visualisations were used in the experiments. The results show that the CPB module can accurately classify images of crop diseases.

17. NB-IoT technology for water quality monitoring system

This project uses the STM32F103 microcontroller and a cloud IoT platform, based on narrowband Internet of Things (NB-IoT) technology, to design a water quality monitoring system that addresses the complexity and low efficiency of conventional water quality monitoring methods.

The device continuously and automatically monitors the temperature, pH, TDS, and ORP levels of the target waters. Real-time data is uploaded automatically to the cloud platform, where users can access the monitoring data. The system has innovation and application value and overcomes the drawbacks of conventional water quality monitoring systems, such as lengthy data collection cycles and poor real-time performance.

18. Recognising personality from facial appearance

In this study, we propose an automatic personality assessment approach using portrait photos, motivated by psychology research showing that a person's facial appearance can serve as an important clue in judging their personality. Crowd-sourced data was used to create the CelebAMask-HQ-Personality face-personality dataset. This dataset consists of annotated personality traits, facial geometric attributes, and facial photos.

The CelebAMask-HQ facial photos are filtered by removing occluded faces. Guided by psychological research, we build geometric features from face landmark information. Volunteers provided the required number of personality annotations using the Ten-Item Personality Inventory (TIPI). Then, using several representative classifiers, such as multi-layer perceptrons, random forests, support vector machines, and k-nearest neighbours, the relationships between facial features and personality are investigated. According to the experimental results, it is possible to infer a person's personality from their facial features.

The COVID-19 coronavirus pandemic has caused a global health crisis, and according to the World Health Organization (WHO), wearing a face mask in public areas is an effective protective measure. The pandemic forced governments across the world to impose lockdowns to prevent virus transmission. Reports indicate that wearing face masks at work clearly reduces the risk of transmission. This project presents an efficient and economical way of using AI to create a safe environment in a manufacturing setting, based on a hybrid model that combines deep and classical machine learning for face mask detection. The face mask detection dataset consists of images with and without masks, and we use OpenCV to perform real-time face detection on a live webcam stream. We use the dataset to build a COVID-19 face mask detector with computer vision using Python, OpenCV, TensorFlow, and Keras. Our goal is to identify whether the person in an image or video stream is wearing a face mask.

Assisting people with visual, hearing, or vocal impairments through a modern system is a challenging job. Researchers today tend to address one impairment at a time rather than all of them at once. This work aims to find a single technique that assists communication for people with visual, hearing, and vocal impairments. The system helps people with impairments communicate with each other and with people who have no impairment. The core of the work is a Raspberry Pi, on which all the activities are carried out. The system assists visually impaired people by letting them hear what is present in text form: the stored text is read aloud through a speaker. For people with hearing impairment, audio signals are converted into text using a speech-to-text conversion technique. This is done with the help of the AMR voice app, which displays what a person says as a text message. For people with vocal impairment, their words are conveyed through the speaker.

Facial recognition is a category of biometric software that works by matching facial features. We study the implementation of various algorithms for secure voting. The proposed system uses three levels of voter verification: the first is UID verification, the second checks the voter card number, and the third applies facial recognition algorithms.

Road accidents have increased significantly, and one of the major reported causes is driver fatigue. After driving continuously for a long time, the driver becomes exhausted and drowsy, which may lead to an accident. There is therefore a need for a system that measures the driver's fatigue level and alerts them when they become drowsy. We propose a system comprising a camera installed on the car dashboard. The camera detects the driver's face and tracks its activity. The system observes changes in the driver's facial features and uses them to estimate the fatigue level. The facial features include the eyes (fast blinking or heavy eyes) and the mouth (yawn detection). Principal Component Analysis (PCA) is applied to reduce the number of features while minimizing the amount of information lost. The resulting parameters are processed by a Support Vector Classifier (SVC) to classify the fatigue level, and the classifier output is then sent to the alert unit.

In this research work, we explore a vehicle detection technique that can be used for traffic surveillance systems. The system integrates with CCTV cameras to detect cars. The first step is always car object detection: Haar cascades are used to detect cars in the footage, and the Viola-Jones algorithm is used to train these cascade classifiers. We modify it to find unique objects in the video by tracking each car in a selected region of interest. This is one of the fastest methods to identify, track, and count cars, with an accuracy of up to 78 percent.

Human activity recognition has become a research area of great interest because it has many significant and forward-looking applications, including automated surveillance, automated vehicles, language interpretation, and human-computer interfaces (HCI). In recent years, extensive and in-depth research has been carried out and progress has been made in this area. The proposed system is intended for surveillance and monitoring applications. This paper presents part of a newer approach to human activity and interaction recognition based on human skeletal poses, for video surveillance using one stationary camera and a recorded video dataset. Traditional surveillance camera systems require people to monitor the cameras 24/7, which is inefficient and expensive. This research therefore provides the motivation for recognizing human actions effectively in real time (future work). The paper focuses on recognizing simple activities such as walking, running, sitting, and standing using image processing techniques.

A traffic sign recognition system (TSRS) is a significant part of an intelligent transportation system (ITS). Identifying traffic signs accurately and effectively can improve driving safety. This paper presents a traffic sign recognition technique based on deep learning, which mainly targets the detection and classification of circular signs using OpenCV. First, the image is preprocessed to highlight important information. Second, the Hough transform is used to detect and locate candidate areas. Finally, the detected road traffic signs are classified using deep learning. The proposed detection and identification method combines image processing with a convolutional neural network (CNN) to classify traffic signs. Because of its high recognition rate, a CNN can be used for a variety of computer vision tasks. TensorFlow is used to implement the CNN. On the German dataset, we are able to identify circular signs with more than 98.2% accuracy.

This paper deals with determining vehicle speed from video recordings. The theoretical part describes the most important techniques, namely Gaussian mixture models, DBSCAN, the Kalman filter, and optical flow. The implementation part covers the design of the system and a description of how the individual components communicate. The conclusion presents tests on recorded video using various vehicles, various driving styles, and various vehicle positions at the time of recording.
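As a rough illustration of the Kalman filter's role in such a pipeline, the sketch below smooths a sequence of noisy per-frame speed estimates with a one-dimensional filter. It is an assumption-laden example rather than the paper's method: the function name `kalman_smooth`, the random-walk speed model, and the variance values are all chosen for illustration.

```python
def kalman_smooth(measurements, process_var=1.0, measurement_var=25.0):
    """Scalar Kalman filter that treats speed as a slowly drifting value.

    measurements: noisy speed readings, for example one per video frame.
    process_var: assumed variance of the true speed change between readings.
    measurement_var: assumed variance of the measurement noise.
    """
    estimate = measurements[0]
    error = measurement_var  # uncertainty of the current estimate
    smoothed = [estimate]
    for z in measurements[1:]:
        # Predict: speed is assumed unchanged, but uncertainty grows.
        error += process_var
        # Update: blend prediction and measurement by their uncertainties.
        gain = error / (error + measurement_var)
        estimate += gain * (z - estimate)
        error *= (1.0 - gain)
        smoothed.append(estimate)
    return smoothed


# Hypothetical noisy speed readings in km/h.
readings = [50.0, 58.0, 47.0, 53.0, 49.0, 55.0]
print(kalman_smooth(readings))
```

A larger `measurement_var` makes the filter trust new readings less and smooth more heavily, while a larger `process_var` lets the estimate follow real speed changes more quickly.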

The internet has become one of the basic amenities of day-to-day living, and people widely access knowledge and information through it. However, blind people face difficulties in accessing these text materials and in using services provided over the internet. Advances in computer-based accessible systems have opened up many avenues for visually impaired people across the globe. Audio feedback-based virtual environments, such as screen readers, have helped blind people immensely in accessing internet applications. We describe a voicemail system architecture that a blind person can use to access email easily and efficiently. This research enables blind people to send and receive voice-based email messages in their native language with the help of a computer.

The human face plays an important role in conveying an individual's mood. The required input is captured directly from the face using a camera and can be used to deduce the person's mood. The detected mood is then used to produce a list of songs that match it. This eliminates the time-consuming and tedious task of manually sorting songs into different lists and helps generate an appropriate playlist based on an individual's emotional features. The Facial Expression Based Music Player scans and interprets this data and creates a playlist according to the parameters provided. The proposed system therefore focuses on detecting human emotions to build an emotion-based music player. We discuss the approaches that existing music players use to detect emotions, the approach our music player follows, and why our system is better suited to emotion detection. A brief overview of the system's operation, playlist generation, and emotion classification is also given.

This paper presents facial detection and emotion analysis software developed by and for secondary students and teachers. The goal is to provide a tool that reduces the time teachers spend taking attendance while also collecting data that improves teaching practices. Disturbing current trends regarding school shootings motivated the inclusion of emotion recognition so that teachers are able to better monitor students’ emotional states over time. This will be accomplished by providing teachers with early warning notifications when a student significantly deviates in a negative way from their characteristic emotional profile. This project was designed to save teachers time, help teachers better address student mental health needs, and motivate students and teachers to learn more computer science, computer vision, and machine learning as they use and modify the code in their own classrooms. Important takeaways from initial test results are that increasing training images increases the accuracy of the recognition software, and the farther away a face is from the camera, the higher the chances are that the face will be incorrectly recognized. The software tool is available for download at https://github.com/ferrabacus/Digital-Class